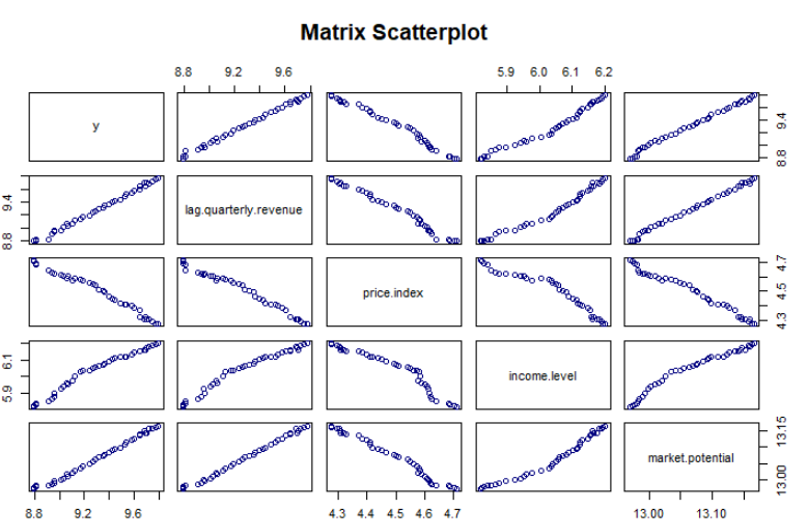

Describe R-square in two different ways, that is, using two distinct formulas. What happens to b weights if we add new variables to the regression equation that are highly correlated with ones already in the equation? Why do we report beta weights (standardized b weights)? Write a regression equation with beta weights in it. What are the three factors that influence the standard error of the b weight? How is it possible to have a significant R-square and non-significant b weights?

With one independent variable, we may write the regression equation as:

Y = a + bX + e

where Y is an observed score on the dependent variable, a is the intercept, b is the slope, X is the observed score on the independent variable, and e is an error or residual.

We can extend this to any number of independent variables:

Y = a + b1X1 + b2X2 + ... + bkXk + e

Note that we have k independent variables and a slope for each. We still have one error and one intercept. Again we want to choose the estimates of a and b so as to minimize the sum of squared errors of prediction. The prediction equation is:

Y' = a + b1X1 + b2X2 + ... + bkXk

Finding the values of b is tricky for k>2 independent variables, and will be developed after some matrix algebra. It's simpler for k=2 IVs, which we will discuss here.

Recall how b and a were calculated in the one variable case. At this point, you should notice that all the terms from the one variable case appear in the two variable case. In the two variable case, the other X variable also appears in the equation. For example, X2 appears in the equation for b1. Note that terms corresponding to the variance of both X variables occur in the slopes.
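The two-predictor slopes described above can be checked numerically. The following is a minimal sketch, assuming the standard least-squares solution written in deviation scores. The choice of mtcars with mpg as Y, disp as X1 and wt as X2 is an assumption made only for illustration, and every variable name is hypothetical.

```r
# Two-predictor least squares by hand, compared with lm().
# Assumption: mpg is Y, disp is X1, wt is X2 (illustrative choice only).
Y  <- mtcars$mpg
X1 <- mtcars$disp
X2 <- mtcars$wt

# Deviation scores: each variable minus its mean.
y  <- Y  - mean(Y)
x1 <- X1 - mean(X1)
x2 <- X2 - mean(X2)

# Sums of squares and cross-products.
s11 <- sum(x1^2)
s22 <- sum(x2^2)
s12 <- sum(x1 * x2)
s1y <- sum(x1 * y)
s2y <- sum(x2 * y)

# b1 = (s22 * s1y - s12 * s2y) / (s11 * s22 - s12^2), and symmetrically for b2.
# Note that s22 (variation in X2) appears in the formula for b1.
denom <- s11 * s22 - s12^2
b1 <- (s22 * s1y - s12 * s2y) / denom
b2 <- (s11 * s2y - s12 * s1y) / denom

# The intercept makes the fitted plane pass through the means.
a <- mean(Y) - b1 * mean(X1) - b2 * mean(X2)

by_hand <- c(a, b1, b2)
from_lm <- unname(coef(lm(mpg ~ disp + wt, data = mtcars)))
print(rbind(by_hand, from_lm))
```

If s12 is zero (uncorrelated predictors), each slope reduces to the one-variable form, the cross-product with Y divided by the predictor's own sum of squares.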

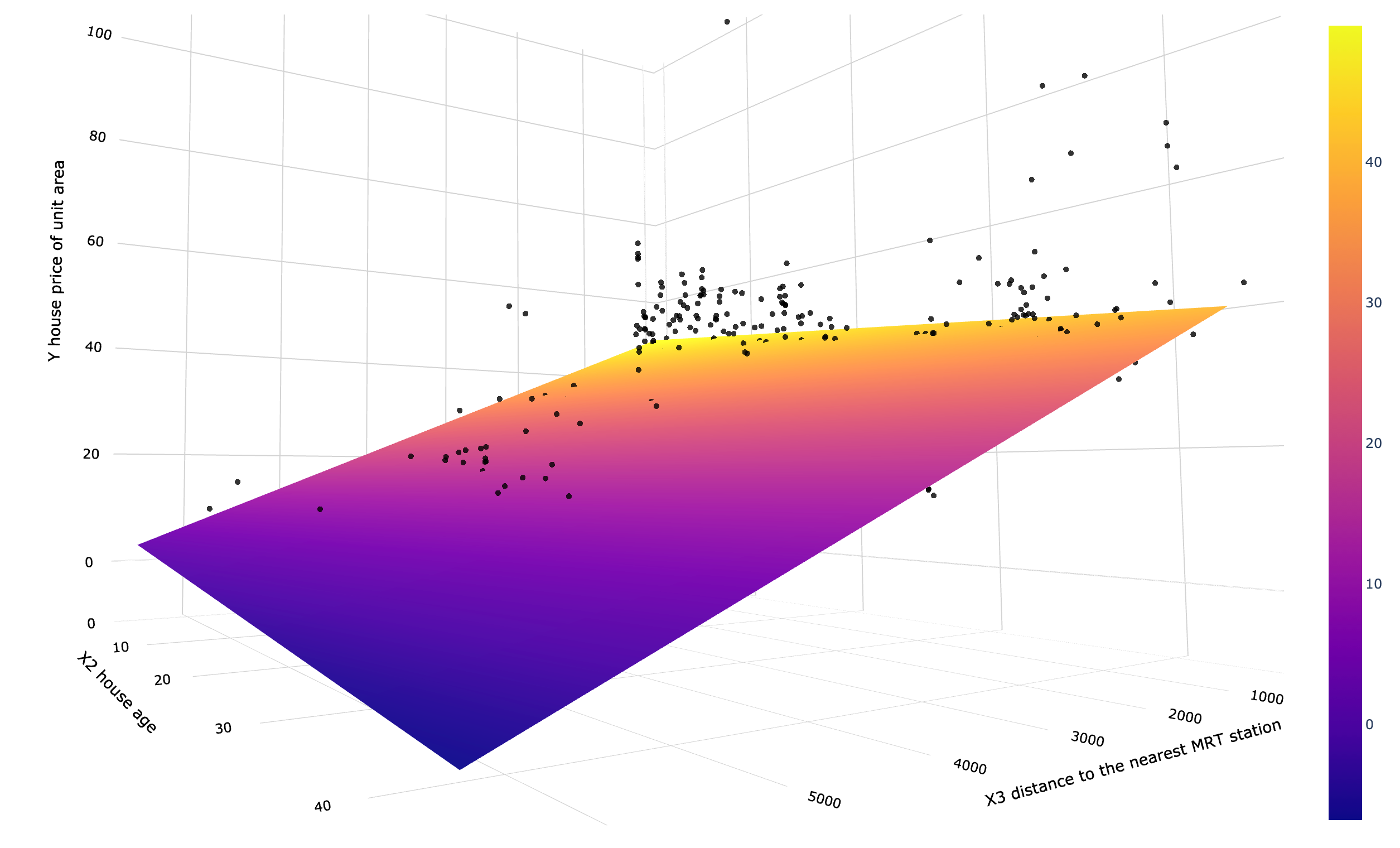

What is the difference in interpretation of b weights in simple regression vs. multiple regression? Write a raw score regression equation with 2 IVs in it.

The basic syntax for the lm() function in multiple regression is `lm(y ~ x1 + x2 + x3, data)`. Here, formula is a symbol presenting the relation between the response variable and predictor variables, and data is the data frame to which the formula will be applied.

Consider the data set "mtcars" available in the R environment. It gives a comparison between different car models in terms of miles per gallon ("mpg"), cylinder displacement ("disp"), horse power ("hp"), weight of the car ("wt") and some more parameters. The goal of the model is to establish the relationship between "mpg" as a response variable with "disp", "hp" and "wt" as predictor variables. We create a subset of these variables from the mtcars data set for this purpose.

The model is created with `model <- lm(mpg ~ disp + hp + wt, data = input)`, where input is the subset described above. We then get the intercept and coefficients as vector elements, printing a header first with `cat("# The Coefficient Values # ","\n")`.

When we execute the above code, the result echoes the call, `lm(formula = mpg ~ disp + hp + wt, data = input)`, followed by the intercept and coefficient values. Based on these values, we create the mathematical equation.

We can use the regression equation created above to predict the mileage when a new set of values for displacement, horse power and weight is provided. For a car with disp = 221, hp = 102 and wt = 2.91, the predicted mileage is obtained by substituting these values into the equation.
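The steps above can be put together as a short runnable sketch. It assumes the subset is built by column name from mtcars; the names `b`, `new_car`, `by_equation` and `by_predict` are hypothetical and not taken from the original listing.

```r
# Subset of mtcars with the response and the three predictors.
input <- mtcars[, c("mpg", "disp", "hp", "wt")]

# Fit the multiple regression model.
model <- lm(mpg ~ disp + hp + wt, data = input)
print(model)

# Named coefficient vector: (Intercept), disp, hp, wt.
b <- coef(model)

# Predict by substituting the new values into the fitted equation.
by_equation <- b[["(Intercept)"]] +
  b[["disp"]] * 221 +
  b[["hp"]]   * 102 +
  b[["wt"]]   * 2.91

# The same prediction through predict(), which applies the equation for us.
new_car <- data.frame(disp = 221, hp = 102, wt = 2.91)
by_predict <- unname(predict(model, newdata = new_car))

print(by_equation)
print(by_predict)
```

Using `predict()` with a data frame avoids retyping rounded coefficients, which is where hand-substituted predictions usually drift.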

We create the regression model using the lm() function in R. This function creates the relationship model between the predictor and the response variable. The model determines the value of the coefficients using the input data. Next we can predict the value of the response variable for a given set of predictor variables using these coefficients.
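One way to see what it means for the model to determine the coefficients from the data: lm() fits by ordinary least squares, so moving any coefficient away from its fitted value should increase the sum of squared errors. The sketch below is only an illustration; the helper `sse` and the shift of 0.5 on the wt coefficient are arbitrary, hypothetical choices.

```r
input <- mtcars[, c("mpg", "disp", "hp", "wt")]
model <- lm(mpg ~ disp + hp + wt, data = input)
b <- coef(model)

# Design matrix: a column of ones for the intercept, then the predictors.
X <- cbind(1, as.matrix(input[, c("disp", "hp", "wt")]))

# Sum of squared errors of prediction for a given coefficient vector.
sse <- function(coefs) sum((input$mpg - X %*% coefs)^2)

# SSE at the fitted coefficients.
sse_fit <- sse(b)

# SSE after nudging one coefficient away from its fitted value.
b_shift <- b
b_shift["wt"] <- b_shift["wt"] + 0.5
sse_shift <- sse(b_shift)

print(c(fitted = sse_fit, shifted = sse_shift))
```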

Multiple regression is an extension of linear regression to the relationship among more than two variables. In simple linear regression we have one predictor and one response variable, but in multiple regression we have more than one predictor variable and one response variable.

The general mathematical equation for multiple regression is:

y = a + b1x1 + b2x2 + ... + bnxn

Following is the description of the parameters used: y is the response variable; a, b1, b2, ..., bn are the coefficients; and x1, x2, ..., xn are the predictor variables.
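As a rough check of the general equation, the fitted values of a model can be rebuilt as the intercept plus each coefficient times its predictor. This is a sketch under the assumption that the mtcars variables mpg, disp, hp and wt are used; the names `a`, `b`, `x`, `y_hat` and `max_gap` are hypothetical.

```r
input <- mtcars[, c("mpg", "disp", "hp", "wt")]
model <- lm(mpg ~ disp + hp + wt, data = input)

# a is the intercept; b holds one coefficient per predictor.
a <- coef(model)[["(Intercept)"]]
b <- coef(model)[c("disp", "hp", "wt")]

# Predictor values as a matrix, columns in the same order as b.
x <- as.matrix(input[, c("disp", "hp", "wt")])

# y = a + b1*x1 + b2*x2 + b3*x3, evaluated for every row at once.
y_hat <- as.vector(a + x %*% b)

# Largest absolute difference from the fitted values stored in the model.
max_gap <- max(abs(y_hat - fitted(model)))
print(max_gap)
```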
